Sports leagues and broadcasters have spent the past few years wrestling with a stubborn trend: for some sports, live viewing is slipping, particularly among younger audiences. As attention fragments across short-form video, social platforms, and interactive entertainment, the traditional broadcast camera cut can feel less immersive than the experiences fans get from modern video games.

Peripheral Labs, a Canada-based startup, believes it has a practical way to close that gap. The company is bringing volumetric video and photorealistic 3D reconstruction to live sports by borrowing a playbook from an unexpected place: the sensor and perception systems developed for self-driving cars.

From autonomous driving to immersive sports

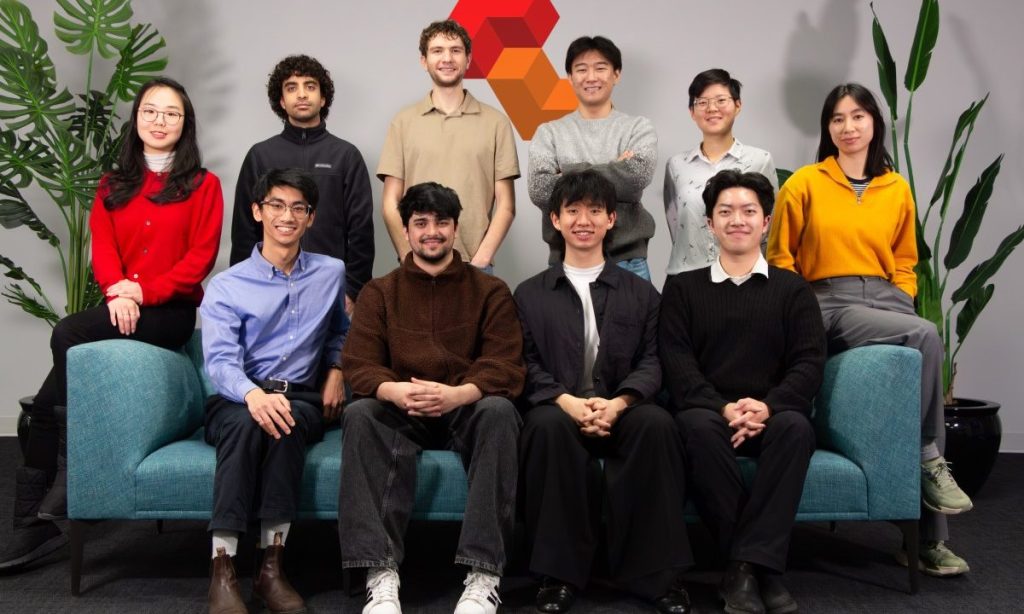

Peripheral Labs was founded in 2024 by Kelvin Cui and Mustafa Khan, two engineers with deep experience in robotics and vehicle perception. Both worked on the University of Toronto’s driverless car team, a background that shaped how they think about reconstructing complex, fast-moving scenes in real time.

Mustafa Khan previously worked as a researcher at Huawei, while Kelvin Cui has experience at Tesla as a software engineer focused on chassis systems. Their sports fandom helped connect their technical expertise to a consumer problem: making live games feel more interactive, controllable, and personalized.

In comments to TechCrunch, Cui described how Khan’s work on 3D reconstruction sparked the idea of watching sports in a “free-flowing, multi-angle” way, closer to how fans move a camera in a game replay. That insight became the foundation for the startup’s product direction.

Why volumetric video has been expensive

The concept of volumetric video in sports isn’t new. High-end productions have shown how 3D capture can let viewers see a play from multiple angles, pause at key moments, or reframe the action in ways a standard broadcast cannot. The barrier has been cost and complexity.

Traditional volumetric setups can require more than 100 cameras around a venue to capture enough data for a convincing 3D reconstruction. That creates major hurdles for teams and leagues: hardware purchases, installation, calibration, maintenance, data processing, and staffing. For many organizations, the economics only work for the biggest events or the largest markets.

Peripheral Labs’ bet: fewer cameras, smarter perception

Peripheral Labs says it can cut the number of cameras needed from more than 100 to as few as 32 by applying computer vision techniques and perception concepts developed for autonomous vehicles. Self-driving systems are designed to interpret depth, motion, and occlusion in messy real-world environments. Live sports, with constant movement, partial obstructions, and tight timing, present a similar technical challenge.

The founders argue that recent advances in AI models and vision systems make this approach more feasible at scale. Reducing the camera count saves more than hardware: it can also lower operational overhead and simplify deployment, which matters for leagues that want consistent coverage across multiple venues.

According to TechCrunch’s report, the company’s business approach is to keep hardware costs as low as possible for teams and broadcasters while securing multi-year contracts for its platform. That suggests a strategy aimed at becoming infrastructure rather than a one-off production add-on.

What fans and broadcasters could actually do with it

The promise of volumetric sports video is not merely “more angles.” It is a different kind of viewing control. Peripheral Labs is building a software platform designed to let broadcasters and fans manipulate a reconstructed scene in ways that feel closer to interactive media.

Interactive viewing controls

- Track a specific athlete during a play, such as following only the ball carrier or watching a defender’s movement off the ball.

- Freeze critical moments and rotate the view to inspect positioning, contact, or spacing.

- Revisit controversial sequences, such as a potential foul, from new viewpoints to better understand what happened.

Biomechanics and next-level stats

Beyond visuals, the platform is positioned to add biomechanical data and performance statistics derived from the company’s own sensor stack, which is described as similar to the depth-capturing sensors used on self-driving cars. If executed well, this could bridge broadcast storytelling and the data-rich analysis fans increasingly expect.

For leagues, that could mean new content formats for social clips, enhanced highlight packages, or premium analysis feeds. For broadcasters, it may offer fresh tools to explain tactics and break down moments without relying exclusively on 2D telestration.

The bigger industry backdrop: engaging Gen Z without alienating everyone else

The push toward interactive viewing reflects a broader industry reality: younger fans often want agency, personalization, and shareable moments. Many leagues have already experimented with alternate broadcasts, real-time stats overlays, and companion experiences. Volumetric video, if it becomes cheaper and easier to deploy, could be another layer in that evolution.

Still, the technology has to fit into real production workflows. Broadcasters will care about reliability, latency, and how seamlessly 3D replays integrate with standard camera feeds. Teams and leagues will weigh whether the experience drives measurable engagement, subscription value, or sponsorship opportunities.

What to watch next

Peripheral Labs is entering a market where the appetite for innovation is real, but budgets and operational constraints remain unforgiving. The key test will be whether the startup can consistently deliver high-quality 3D reconstruction with fewer cameras, and whether its sensor-driven data layer becomes a must-have feature rather than a novelty.

If it succeeds, the result could be a new middle tier of volumetric sports production: not limited to marquee events, but accessible enough for broader league adoption, and compelling enough to make live viewing feel interactive again.