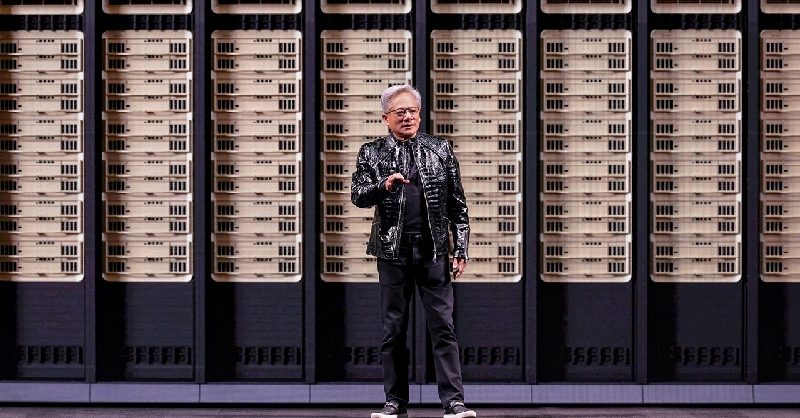

Nvidia doubles down on AI platforms at CES 2026

At CES 2026, Nvidia used the world’s biggest consumer tech stage to signal where the next wave of computing is heading: tightly integrated AI platforms that span chips, software, robotics and vehicle autonomy. The company introduced its new Rubin platform, a next‑generation Vera CPU family, a robotics stack called GR00T, and an advanced autonomous driving suite branded Alpamayo L4.

The announcements underline Nvidia’s ambition to be more than a graphics or accelerator vendor. Instead, it aims to be the central infrastructure provider for everything from data centers and humanoid robots to fully autonomous vehicles.

Rubin platform: Nvidia’s next AI-compute foundation

The newly unveiled Rubin platform is positioned as the successor to Nvidia’s current flagship AI systems. Nvidia did not disclose full specifications at CES, but it framed Rubin as a complete AI computing platform rather than a single chip.

A full-stack approach to AI infrastructure

According to Nvidia, Rubin will integrate cutting-edge GPU accelerators, high‑bandwidth memory, advanced interconnect technology and a tightly coupled software stack. The platform is designed to handle the escalating demands of large language models, multimodal generative AI, and complex digital twins.

By emphasizing a full-stack design, Nvidia is reinforcing a strategy that has already paid off in the data center: sell not only silicon, but also the compilers, AI frameworks, orchestration tools and libraries that make the hardware indispensable.

Vera CPU: Nvidia’s push deeper into general-purpose compute

The introduction of the Vera CPU family signals that Nvidia wants a larger slice of general-purpose data center and edge computing workloads, not just accelerated AI tasks.

Designed for heterogeneous AI systems

The Vera CPU is described as a high-performance, energy‑efficient processor intended to sit alongside Nvidia GPUs in heterogeneous systems. The company is targeting cloud computing, AI inference, and edge computing scenarios where tight coupling between CPU and GPU is critical for performance and cost efficiency.

By owning both sides of the compute equation, Nvidia can optimize system architecture, memory access patterns and AI workloads end‑to‑end. That approach challenges incumbent CPU providers and could further lock major cloud platforms into the Nvidia ecosystem.

GR00T: a robotics stack for the age of embodied AI

Among the most eye‑catching announcements was GR00T, a new robotics platform aimed at accelerating the development of embodied AI: systems in which AI models control physical machines in the real world.

From simulation to real-world deployment

GR00T is framed as a complete robotics stack that spans simulation, training and real‑world deployment. It leverages Nvidia’s existing strengths in 3D simulation and digital twins, allowing developers to prototype and test robot behaviors virtually before pushing them to physical hardware.

The platform is expected to support a wide range of form factors, from industrial robots and warehouse automation systems to emerging humanoid robots designed for logistics, manufacturing and service roles. By standardizing core components such as perception, motion planning and control algorithms, GR00T aims to lower the barrier to entry for robotics startups and established OEMs alike.

Alpamayo L4: Nvidia’s leap toward full vehicle autonomy

The final major pillar of Nvidia’s CES 2026 showcase was Alpamayo L4, an advanced autonomous driving platform named after one of the Andes’ most famous peaks. The “L4” label refers to Level 4 autonomy, where a vehicle can operate without human intervention in defined conditions or geofenced areas.

Level 4 autonomy for next-generation vehicles

Alpamayo L4 builds on Nvidia’s existing Drive platforms, combining high-performance system-on-chip designs with a sophisticated software stack for perception, prediction and planning. The system is intended for robotaxis, autonomous shuttles and commercial fleets that require robust sensor fusion and real-time decision-making.

Automakers and mobility providers are under pressure to move beyond advanced driver assistance and toward genuine self-driving capabilities. By offering a pre‑integrated platform, Nvidia is positioning Alpamayo L4 as a shortcut to deployment, reducing the need for each manufacturer to build a complete autonomy stack from scratch.

Strategic implications for the AI and autonomy race

The Rubin, Vera CPU, GR00T and Alpamayo L4 announcements collectively underscore how Nvidia is stretching its reach across the entire AI value chain. From cloud data centers and edge devices to robots and autonomous vehicles, the company is building interlocking platforms designed to keep developers and enterprises within its ecosystem.

For competitors in semiconductors, cloud infrastructure, robotics and automotive technology, the message from CES 2026 is clear: matching Nvidia will require not just individual chips, but cohesive platforms, robust software ecosystems and long-term investment in AI research and tooling.

As regulators, automakers, industrial firms and cloud providers digest the announcements, the coming years will show whether Nvidia’s full‑stack bet pays off, and how quickly the new Rubin, Vera CPU, GR00T and Alpamayo L4 platforms move from CES stage demos into large-scale, real‑world deployment.