Neurophos lands $110M to redefine energy-efficient AI compute

Neurophos, a next-generation semiconductor startup, has raised $110 million to develop AI accelerator chips that promise to make large-scale AI compute up to 100x more energy efficient. The funding underscores mounting pressure on the AI industry to curb soaring power consumption as demand for advanced models and data-intensive applications explodes worldwide.

The investment comes at a time when hyperscale data centers and cloud providers are grappling with the physical and economic limits of traditional GPU-based architectures. By using advanced photonic computing and novel chip architectures, Neurophos aims to deliver the performance required for cutting-edge AI while dramatically reducing the energy footprint.

Why AI’s energy problem is becoming unsustainable

The rapid adoption of large language models, generative AI, and real-time AI inference has triggered an unprecedented surge in data center energy demand. Training and running state-of-the-art models requires thousands of high-end GPUs, each drawing hundreds of watts, multiplied across massive server farms.
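
To make the scale concrete, the multiplication described above can be sketched in a few lines of Python. This is a back-of-the-envelope illustration only: the function names, the GPU count, the per-GPU wattage, and the overhead multiplier (PUE) are all hypothetical assumptions, not figures from Neurophos or from any specific data center.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def cluster_power_mw(num_gpus: int, watts_per_gpu: float, pue: float = 1.0) -> float:
    """Estimate facility power in megawatts for a GPU cluster.

    pue is the power usage effectiveness: total facility power divided by
    IT power. A value of 1.0 means no cooling or distribution overhead.
    """
    if num_gpus < 0 or watts_per_gpu < 0 or pue < 1.0:
        raise ValueError("counts and watts must be non-negative, pue must be >= 1.0")
    return num_gpus * watts_per_gpu * pue / 1_000_000


def annual_energy_mwh(power_mw: float, utilization: float = 1.0) -> float:
    """Convert average power draw into megawatt-hours consumed per year."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return power_mw * HOURS_PER_YEAR * utilization


if __name__ == "__main__":
    # Hypothetical cluster: 10,000 GPUs at 500 W each, with 20% facility overhead.
    power = cluster_power_mw(num_gpus=10_000, watts_per_gpu=500.0, pue=1.2)
    energy = annual_energy_mwh(power)
    print(f"Facility power: {power:.1f} MW")
    print(f"Annual energy:  {energy:,.0f} MWh")
```

Under these assumed inputs, a single cluster of this size draws on the order of several megawatts around the clock, which is why siting and grid capacity have become planning constraints.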

Industry analysts warn that AI-related electricity usage could rival that of small countries within a few years if current trends continue. Power constraints are already influencing where new data centers can be built, how quickly cloud capacity can be added, and what kinds of AI services are economically viable.

Against this backdrop, the promise of a 100x improvement in energy efficiency is not merely an incremental gain; it represents a potential reset in how AI infrastructure is designed and deployed. If Neurophos can deliver on its claims, it could ease grid pressure, lower operational costs, and make advanced AI accessible to a far broader range of industries and regions.

Inside Neurophos’ approach to ultra-efficient AI hardware

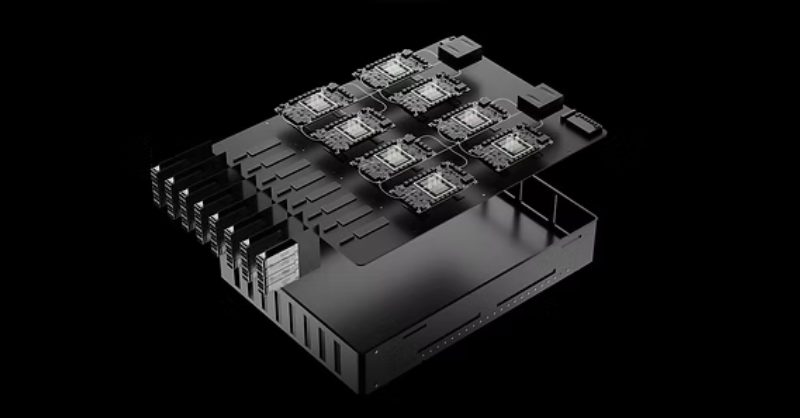

While the company has not disclosed every technical detail, Neurophos is understood to be working on a new class of AI accelerators that depart sharply from conventional electronic-only designs. Instead of relying purely on electrons moving through silicon, the startup is believed to harness photonics, using light to perform certain computations and to move data.

Leveraging photonics for AI workloads

In traditional chips, data must be shuttled between memory and compute units, a process that consumes significant energy and generates heat. In contrast, photonic interconnects and optical computing elements can move and process information with far lower losses and higher bandwidth.

For AI workloads dominated by matrix multiplications and tensor operations, this architecture can be especially powerful. By tightly integrating specialized AI cores with high-speed optical pathways, Neurophos aims to reduce the energy cost per operation while maintaining or even surpassing the throughput of modern GPUs.

Targeting both training and inference

The company is positioning its technology for both AI training (the compute-intensive process of building models) and AI inference (running deployed models in production to serve millions of users). Training large models can require weeks of continuous compute time, while inference must be ultra-responsive and cost-efficient.

By optimizing across both phases, Neurophos is seeking to become a foundational player in the AI hardware stack, not just a niche accelerator for narrow workloads.

Strategic investors eye long-term AI infrastructure shift

The $110 million raise signals strong confidence from investors that the current GPU-centric landscape is ripe for disruption. While specific backers were not disclosed, such a round typically attracts a mix of venture capital firms focused on deep tech, strategic investors from the semiconductor and cloud computing sectors, and possibly sovereign or infrastructure-focused funds looking at the long-term impact of AI on energy grids.

These investors are betting that hyperscalers, cloud platforms, and AI-first enterprises will be forced to rethink their hardware roadmaps as energy costs and sustainability pressures mount. A solution that can dramatically lower total cost of ownership (TCO) while supporting the next wave of AI models would be strategically invaluable.

Positioning in a crowded AI chip race

Neurophos is entering a fiercely competitive field. Established players like NVIDIA, AMD, and Intel, alongside cloud giants such as Google, Amazon, and Microsoft, are all developing custom AI accelerators. Numerous startups are also pushing innovations in neuromorphic computing, analog AI, and domain-specific architectures.

The company’s differentiation hinges on its claimed 100x energy efficiency advantage and the maturity of its hardware-software stack. For widespread adoption, Neurophos will need robust developer tools, compatibility with leading AI frameworks, and seamless integration into existing data center and cloud environments.

Implications for data centers, climate goals and AI access

If Neurophos can scale production and validate its performance claims in real-world deployments, the impact could be felt across the entire digital ecosystem.

Lower energy bills and denser compute

For operators of large data centers, more efficient AI hardware translates directly into lower electricity bills, reduced cooling requirements, and the ability to pack more compute into existing facilities. This can delay or reduce the need for new data center builds, which are increasingly constrained by grid capacity and regulatory scrutiny.
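
A rough way to reason about the electricity-bill effect is sketched below. It is an illustration under stated assumptions, not a model of any real facility: the function names, the baseline power, the electricity price, and in particular `accelerator_share` (the fraction of facility power attributed to AI accelerators) are hypothetical. The sketch assumes only the accelerator portion of the load shrinks with the efficiency gain, while the rest of the facility load stays unchanged.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def power_after_efficiency_gain(
    baseline_mw: float, accelerator_share: float, efficiency_gain: float
) -> float:
    """Facility power after the accelerator portion becomes more efficient.

    accelerator_share: fraction of baseline power drawn by accelerators (0 to 1).
    efficiency_gain:   factor by which accelerator energy per unit of work
                       drops, e.g. 100.0 for the claimed 100x improvement.
    The workload is assumed to stay fixed.
    """
    if not 0.0 <= accelerator_share <= 1.0:
        raise ValueError("accelerator_share must be between 0 and 1")
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    accelerator_mw = baseline_mw * accelerator_share
    other_mw = baseline_mw - accelerator_mw
    return other_mw + accelerator_mw / efficiency_gain


def annual_electricity_cost(avg_power_mw: float, price_per_mwh: float) -> float:
    """Yearly electricity cost for a constant average power draw."""
    return avg_power_mw * HOURS_PER_YEAR * price_per_mwh


if __name__ == "__main__":
    # Hypothetical facility: 10 MW baseline, half of it drawn by accelerators,
    # electricity priced at 100 currency units per MWh.
    before = annual_electricity_cost(10.0, 100.0)
    after_mw = power_after_efficiency_gain(10.0, accelerator_share=0.5, efficiency_gain=100.0)
    after = annual_electricity_cost(after_mw, 100.0)
    print(f"Annual cost before: {before:,.0f}")
    print(f"Annual cost after:  {after:,.0f}")
```

One consequence of this simple model is that the facility-level saving is capped by the accelerator share: even a very large chip-level gain leaves the non-accelerator load untouched, so the headline efficiency figure and the reduction in the total bill are not the same number.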

Supporting sustainability and ESG commitments

Enterprises and cloud providers are under mounting pressure to meet net-zero and broader ESG targets. AI is both a tool for optimizing energy systems and a major new source of demand. Hardware that significantly cuts AI-related carbon emissions will be central to keeping corporate climate pledges credible as AI usage grows.

Democratizing advanced AI

By lowering the cost of running powerful models, Neurophos-style accelerators could help democratize access to advanced AI capabilities. Smaller companies, research institutions, and emerging markets often struggle with the high cost of GPU-based compute. More efficient chips can make it feasible to deploy sophisticated AI in fields such as healthcare, education, manufacturing, and public services without prohibitive infrastructure investments.

What comes next for Neurophos

With $110 million in fresh capital, Neurophos is expected to accelerate product development, expand its engineering teams, and deepen collaborations with early adopter customers. The coming 12–24 months will be critical as the company moves from lab prototypes to production-grade silicon and large-scale pilot deployments.

As AI continues to reshape industries and strain energy systems, the race to build radically more efficient AI infrastructure is intensifying. Whether Neurophos becomes a cornerstone of that new infrastructure will depend on how quickly it can translate its technological promise into reliable, deployable hardware at scale.